It’s Wednesday evening. No specific plans, and I’m chilling on the sofa scrolling through TikTok and Instagram while Below Deck Sailing Yacht plays in the background. I’m tired and bored even though I’m not moving, and I have multiple screens trying to distract me from my boring Wednesday evening with no plans and no people available to save me from myself. It’s just me and my screens, my apps and my algorithms.

Suddenly I receive an alert from my period tracker telling me that most likely, it will come on Friday, which explains why I feel so tired and why I keep craving bread even though I’m gluten intolerant. Unsurprisingly, Instagram and TikTok know this as well. Comfy clothing ads, vegan Ben & Jerry’s, pilates against general inflammation, Beyonce’s Renaissance tour, carrot salad for hormonal balance, retinol, Margiela/Ann Demeulemeester/Rick Owens/The Row clean girl aesthetic mixed with ‘you probably have autism’ videos.

An Uber Eats pop-up reminds me that I can order Indian instead of cooking. 40-50 min away. Great, butter chicken it is.

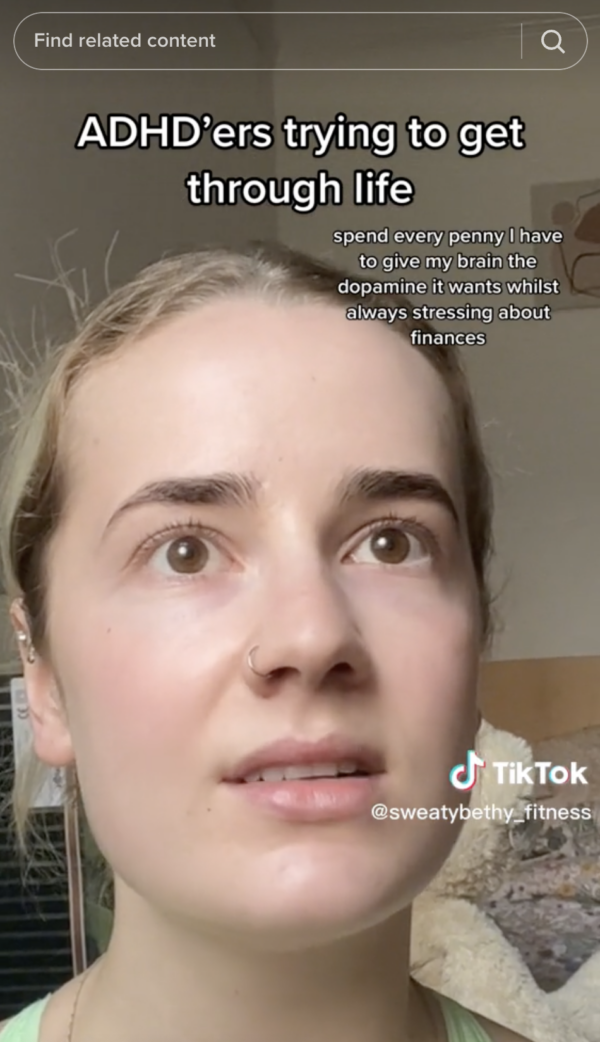

I recently heard someone talk about how there’s an epidemic of people who are self-diagnosing with Autism and ADHD. Yes, they exhibit the symptoms, but maybe it’s just the dopamine burnout caused by the same apps that made them self-diagnose in the first place. I wonder how true this might be. Are these algorithms so good at analysing our behaviour that they end up reflecting it back to us in a digested 20-second video that allows us to identify things in ourselves that we weren’t aware of? Or are we consuming this content at such a large and quick rate that we end up becoming what they predict us to be? In other words, are we fulfilling their prophecies or do they know us better than we know ourselves?

Did I really want those Tabi ballet flats, or did the algorithm make me buy them? Do I have ADHD, or am I experiencing dopamine burnout? Am I having a style crisis because I am an evolving human being or because the algorithm keeps pushing me into the clean girl aesthetic while also wanting me to lean into the Y2K and Rick Owens vibe, but also learn how to wear a fucking hair clip correctly because that’s what the Copenhagen-influenced Amsterdam girlies are into? Am I ready to move into a cabin in the woods and live my girl moss dreams or go clubbing in Berghain, pluck my eyebrows to death and bleach my hair? Is my stomach hurting because all of this is going through my head and my screens (yes, multiple) at the same time? Because you can have it all girl, you go girl, work-life balance girl, celery juice girl. Or do I have that rare, incurable undiagnosed disease the algorithm told me to google on WebMD?

Am I going blind and need glasses, or should I just listen to my mom and stare into the distance for at least 10 minutes every hour?

When I was younger, things seemed easier but also a lot more serious. Now things seem unserious and a lot more complicated. Nothing is that important anymore, but everything seems to have a thousand more layers; everything is more nuanced and complex while, at the same time, stupid. I feel very old saying that. And yet I grew up in the middle of a digital revolution. I can’t remember a time when there wasn’t a computer in my house. I remember being very little and playing with the Paintbrush app on my father’s Macintosh. His cellphone was the size of a brick, and you could hear the sound of the internet over the house phone. Yes, we had landlines. We had a set of CDs containing the Encyclopaedia Britannica instead of Google and Wikipedia. Facts seemed to be a lot easier to identify, and fiction was a thing left for the arts. Nobody was talking about the Pope wearing Moncler, and Trump being president would have been unimaginable.

In the era of AI and misinformation, life has never been more confusing. Facts and fiction are blended seamlessly. All information seems extremely urgent and, at the same time, irrelevant. It has made sceptics out of all of us. Hyperaware that at any time, we can be deceived.

But the nature of AI has always been deceptive. Its success has always relied on its capacity to imitate, trick or replicate human language. In Alan Turing’s Computing Machinery and Intelligence, deception is placed at the centre of the test to determine a machine’s capacity to exhibit intelligent behaviour. Turing’s test proposed judging machines on their capacity to make human subjects believe they are human. So as technology advanced, AI scientists began studying the human’s reaction to the machine in order to improve its performance based on Turing’s work. And even though deception was never the main objective, creating the illusion of intelligence rather than intelligence itself became the force driving sentient-like technologies like AI. As writer and professor in Media Theory and History Simone Natale points out: “While debates have largely focused on the possibility that the pursuit of strong AI would lead to forms of consciousness similar or alternative to that of humans, where we have landed might more accurately be described as the creation of a range of technologies that provide an illusion of intelligence—in other words, the creation not of intelligent beings but of technologies that humans perceive as intelligent.”1 Turing named this ‘The Imitation Game’.

And as algorithms got better at imitating us and scientists got better at training them, we also became lazier at recognising them, making it easier for us to fall into the illusion.

In Deceitful Media: Artificial Intelligence and Social Life after the Turing Test, Natale says that “[a]t the roots of technology’s association with magic lies, in fact, its opacity. Our wonder at technological innovations often derives from our failure to understand the technical means through which they work, just as our amazement at a magician’s feat depends partly on our inability to understand the trick”. Yet in my experience, knowing does not guarantee that we will not fall into the illusion. In fact, most people who enjoy magic tricks are not ignorant of how the tricks are performed, at least in their most superficial way. Magic shows still attract masses of people ready to surrender to fantasy in exchange for entertainment, aware that it is not real magic. Even more, magicians themselves are avid consumers of the trickery of their colleagues. Because deep down, we all want to be believers.

In the same way, our interactions with AI are based, as with many technologies and other systems of belief, on the projections we make in the spaces left by the illusion. We project into the machine our desire to see something that confirms our expectations. We deeply want to believe that what we want to see, hear, feel, and experience is really there.

So perhaps it is not surprising that in our loneliest or most boring moments, we turn to our machines for companionship, wanting to believe in the promise of closeness, of something that reflects back to us our deepest fears, wildest dreams and general anxieties, all repackaged in a shiny wrapper of entertainment or distraction, and the promise of taking our problems away.

AI will save the world, solve climate change, inequality, work, creativity blocks, mental health!

When ELIZA, one of the first chatbots circa 1964, was tested on the secretary of its programmer Joseph Weizenbaum, also known as one of the fathers of modern AI, the secretary famously asked him to leave the room since the conversation between her and the machine had turned too personal, too intimate. You see, ELIZA was programmed to emulate a non-directive psychotherapist, and Weizenbaum’s intention was to prove how communication between humans and machines was superficial. Instead, he ended up proving the opposite, sort of. The secretary ended up projecting her desire to be heard onto the machine. In psychology, this is called projection: when ‘inside’ content is mistaken to be coming from the ‘outside’ or the Other.

She, too, wanted to believe.

In the summer of 2022, I graduated from the Sandberg Institute, where I did a temporary master’s program called F for Fact. The program (which was extended for two more years) focused on investigating different ways of knowledge through artistic research. The blurry lines between Facts and Fiction, the way knowledge is produced. What knowledge is and what it is not.

One of the things you need to do to graduate is write a thesis, and at the time, I was not really looking forward to it. My bachelor’s thesis had left me with some PTSD, and I didn’t want to sound stupid or like I was trying too hard. So I thought it would be a great idea to ask GPT-2 (just released on early sign-up access) to write my thesis for me. I had always been fascinated by technology, and I was then in my Google Earth era and working on a project about the materiality of digital technologies and the Internet, researching transatlantic internet cable networks and lithium mines. So it seemed like a great idea to use this new technology to write my thesis for me.

But what started as a simple ‘I am too lazy and insecure, let a machine do it for me’, became an exploration of how these technologies would change the way we create knowledge and whether knowledge could be generated. Could we outsource knowledge creation to machines? And could I ‘cheat’ my way out of the thesis? Long story short, it turns out I couldn’t. Automation was not liberation. I still needed to write it, and it probably would have been easier just to write it myself. But the process became the topic of my thesis and the object of the research itself.

AKA, I ended up writing about co-writing with AI while co-writing with AI.

Looking back on it, one of the most interesting parts of co-writing was that even though I went into the process thinking, ‘I’m not gonna fall for it’, at times, I ended up forgetting I was talking to algorithms. Turns out I also wanted to believe in the promise of a machine that could help me overcome my anxieties around writing. And it kind of did, just not in the way I was expecting it to.

What happened is that I ended up needing to be extremely precise in what I wanted to write about, or else the algorithms would take me to topics I didn’t want or need to address. Nowadays, this is really clearly exemplified by how prompt engineers are becoming more and more important when working with AI. The capacity to get what you want from the algorithms is directly linked to the quality of the prompt. AKA, what you ask is what you get, but not always what you want.

And in my case, I couldn’t get what I wanted: a quick thesis. But I got what I needed: a bunch of AIs making me realise I was not as bad a writer as I thought I was.

In the end, the thesis became a collection of texts co-written by me and the machines (GPT-2, GPT-3, ELIZA and Replika), plus a reflective text written only by me, in which I looked back on the joys and frustrations of trying to co-write with AI, the problematic things in it (biases and all) and the need to engage with them with a critical eye.

I started as a sceptic, stumbled into my own projections and beliefs, and I ended up falling in love with the glitchy parts of my dear machines, which offered digital companionship and collaboration when I most needed it.

It’s Monday evening, and I am working on this text, thinking back on another lecture I gave two weeks ago to a group of scientists from the AI department at a Dutch university. I talked about how I work with AI to co-write and collaborate on different projects like my thesis. One of them asked me if I was afraid of AI. I answered that I was afraid of what humans would do with it. Another asked me if I thought artists would be replaced by AIs and the future of human art was dead. I pointed out how with the invention of the camera, people predicted the end of painting, yet painters still paint. And in time, the camera itself became a tool for artistic production, not only documentation. It didn’t take that long before artists started experimenting with the new medium and adopting it as part of their set of tools. It will be the same with AI. Artists will always art. And technological advancements will always go through a period of adjustment before they are normalised and demystified. When the phonograph was invented, people feared it was capturing the souls of the people it recorded. When Kindles appeared, people speculated it was the end of books. And in the face of Spotify, there is a return to the LPs. So perhaps what will happen is that human-made art will be the equivalent of organic vegetables, with ‘made by a human’ stamps.

A long time ago, we dreamed of and feared the end of work with the rise of machines. We dreamed of having more free time but also feared losing our jobs. Instead, we are working more than ever, having less free time and inventing more jobs.

We keep hoping that making more machines will save us from the problems other machines have created. Recently I read an article in the Guardian pointing out that Big Tech people and politicians constantly mention how, through AI, they will be able to make smarter choices and solve big problems for the benefit of humankind. But this is in itself quite stupid. Solving climate change doesn’t need smarter minds to create new solutions. We already know what to do; we just need people ready to put economic interests aside in order to reverse decades of damage.

As I sit on my sofa, again, trying not to fall into another TikTok hole, I realise I’m getting anxious again because I’m thinking about climate change, and that shit is scary. So I ask ChatGPT to make a joke to lighten the mood.

This is what came out:

Why did the AI start worrying about climate change? Because it realised it couldn’t CTRL+Z the planet’s rising temperature.

It also explained why it should be funny:

This joke is funny because it combines a humorous play on words with a relevant and serious topic. The punchline revolves around the computer keyboard shortcut ‘CTRL+Z,’ which is commonly used to undo or revert actions in many software programs. In this context, it is used metaphorically to suggest that the AI realised it couldn’t undo or reverse the planet’s rising temperature caused by climate change.

The joke cleverly blends technology and the environment, highlighting the AI’s recognition of a problem that cannot be easily undone or reversed. By juxtaposing the familiar computer shortcut with the pressing issue of climate change, the joke elicits a humorous response while also highlighting the seriousness and urgency of addressing environmental concerns.

I am also suddenly very aware of the irony of using a super-polluting technology to talk about climate change. And even more, to make jokes about it. According to Bloomberg, the initial training of GPT-3 consumed more energy than what the average US household consumes in three years. And I’m not even mentioning all the material resources like minerals and water and cables, and Roombas cleaning the servers, and people making sure the Roombas are emptied and plugged in, and coders working for 11 dollars per hour, and us training the models for free. The old ‘if you are not paying for it, you are the product’.

But it is also a bit hard not to fall for something designed to mirror us and keep us entertained. We all like looking at ourselves, and the algorithms know it. We all want to see more of what we like, especially when the world seems to be ending… once more. The one thing that truly comforts me is knowing how many times humanity has predicted the end of the world throughout history. And yet here we are.

I feel guilty from time to time because I am using technologies that I know are bad for me and the environment. But I also make a point to try to use them critically and put my tiny seed of resistance. Clicking ‘no’ to all cookies. Deleting my apps now and then. Using alternative platforms and programs. Blocking all ads. Going out for a walk instead of staring at my phone. Helping an old lady cross the street and carry her groceries. I remember Michelle Young’s words: “Power is relative. No one of us can bring about change by ourselves. But for each of us, our part is vital.”

I’ll try listening more to my mom and staring out the window for ten minutes now and then.

To not WebMD my symptoms. To not buy the next thing TikTok tells me to buy, because it won’t solve all my problems.

I’ll also try not to feel so guilty and do more. To acknowledge that our relationship with technology is very intimate and intricate, but also problematic. Like a codependent relationship. Maybe we should all go to therapy.

But also, like @ummsimonee said: “If you speak what you want into existence, at the very least, the Instagram algorithm will hear you.”

And my personal favourite: “Nobody knows me like the Notes app does.”

Thank y’all.

This text is an adaptation of Mariana Fernández Mora’s lecture at Spui25 during our Co-Creation with AI event.

References

1. Natale, Simone. (2021). Deceitful Media: Artificial Intelligence and Social Life after the Turing Test, 3. doi:10.1093/oso/9780190080365.001.0001.